B2B Data Enrichment: Engineering Agency Growth

Stop guessing. This B2B data enrichment guide helps agency founders build pipeline. Learn frameworks and metrics for effective implementation.

Bad CRM data is not a sales annoyance. It is a revenue systems failure. Gartner reports that poor data quality costs companies $15 million per year on average, and sales reps spend 27% of their time on manual prospect research instead of selling, cited in Databar’s roundup of enrichment tools that references Gartner’s findings (https://databar.ai/blog/article/best-b2b-data-enrichment-tools-in-2025). B2B data enrichment is the operational fix: appending, correcting, validating, and refreshing account and contact records with outside data so your CRM reflects who can buy, what stack they run, and whether they are in market.

If you run a 100 to 500 person software development agency, this matters for one reason. You do not need more leads. You need fewer wrong accounts, fewer dead contacts, better timing, and tighter niche selection. That is what enrichment changes when it is implemented as rev ops infrastructure instead of a one-off list purchase.

The Unspoken Cost of Stale CRM Data

The market size tells you this is no longer a back-office cleanup task. MarketsandMarkets, cited by SuperAGI, says the b2b data enrichment market was valued at $1.1 billion in 2020 and is projected to reach $3.4 billion by 2025, a 20.5% CAGR (https://web.superagi.com/2025-b2b-data-enrichment-trends-how-real-time-api-integration-is-revolutionizing-sales/). Buyers do not fund that kind of market growth to make spreadsheets prettier. They fund it because stale CRM data blocks revenue.

A dev agency usually feels this in four places first. SDRs research the same accounts repeatedly. Outbound goes to people who changed roles. Case-study-led messaging misses because the account is on the wrong stack. Reporting lies because the database is full of duplicates, blanks, and old firmographics.

B2B data enrichment fixes that by doing three concrete jobs:

| Function | What changes in the CRM | Why a dev agency should care |

|---|---|---|

| Append | Adds missing fields like industry, role, company details, tech stack, and buying signals | Lets you segment by niche instead of blasting generic outreach |

| Correct | Replaces outdated titles, emails, and company attributes | Stops wasted touches on people who cannot buy |

| Refresh | Re-checks records on an ongoing basis | Keeps targeting usable after org changes and budget shifts |

If you want a plain-English primer on the broader real cost of poor data quality, it is a useful companion to this conversation. The strategic point is simpler. If your CRM cannot tell your team which accounts fit your niche and which stakeholders are reachable right now, your pipeline math is fiction.

Why Your Raw Data Is a Pipeline Killer

Raw data is not neutral. It actively damages pipeline because it pushes your team toward the wrong accounts, the wrong contacts, and the wrong timing.

Gartner’s numbers are the cleanest way to frame the problem. Poor data quality costs companies $15 million per year on average, sales reps spend 27% of their time on manual prospect research, and enriched data platforms deliver 66% higher conversion rates, as summarized by Databar (https://databar.ai/blog/article/best-b2b-data-enrichment-tools-in-2025). For a software agency, that is not abstract. It shows up in missed meetings, slow cycles, and account lists that look large but have very little buying potential.

The waste is operational, not theoretical

An engineer-minded founder should treat this like a throughput problem.

Your team writes outbound for accounts that do not fit your service line. Your reps chase contacts who no longer own the problem. Your marketers cannot build a clean segment for “legacy stack modernization” or “cloud migration in regulated verticals” because the underlying records are incomplete. You think you have a demand problem. You usually have a data precision problem.

The biggest mistake I see is agencies buying intent tools or hiring SDRs before fixing the record layer underneath. That is upside down. If the base data is wrong, better tooling just helps you move faster in the wrong direction.

Why niche authority breaks without enrichment

A dev agency wins faster when it narrows the story. “We help fintech teams migrate aging .NET systems.” “We build Django-based platforms for data-heavy SaaS.” That positioning only works if your data can support account selection and personalized messaging at the same level of specificity.

Without enrichment, you end up with broad filters like employee count and industry. Those fields are too weak on their own. They tell you almost nothing about delivery fit, technical pain, or current buying motion.

With enrichment, you can route outreach based on details that matter:

- Technographic fit tells you whether the account runs the stack you know how to replace, integrate, or optimize.

- Firmographic context tells you whether the company is large enough, mature enough, or structured enough to buy your kind of engagement.

- Contact verification tells you whether the person you are emailing still owns the function.

- Intent and predictive signals tell you whether now is a sensible time to reach out.

If your outbound list is just names, titles, and domains, you are not running pipeline generation. You are running a manual guessing engine.

What better data changes in practice

The practical effect is tighter list quality and less rep thrash.

A clean enrichment layer lets you suppress bad-fit accounts before they hit a sequence. It lets you map multiple stakeholders instead of relying on one random “Head of Engineering” record. It gives your team enough context to reference real stack decisions, hiring patterns, or active research signals.

That is why conversion moves. Not because “data” is magical. Because your team stops contacting the wrong people with generic claims.

Here is the simple founder-level test:

| Symptom | What it usually means | What enrichment fixes |

|---|---|---|

| Good copy, weak reply rates | Wrong contacts or weak segmentation | Verified contacts, better account filters |

| Many meetings, low close rate | Poor-fit accounts entering pipeline | Better firmographic and technographic qualification |

| SDRs complain about list quality | CRM fields are incomplete or stale | Automated append, validation, refresh |

| Niche positioning is not landing | Messaging is right, audience selection is wrong | Intent and tech-based segmentation |

If you already distrust marketing, good. Apply that same skepticism to your CRM. Raw data is one of the biggest hidden reasons agencies stay stuck as interchangeable vendors.

The Four Enrichment Data Types That Matter

Most explanations of b2b data enrichment are useless because they list every possible field and call it strategy. Founders do not need a taxonomy lesson. They need to know which data types help them build a niche, target the right accounts, and get replies from technical buyers.

A decent high-level overview of what is data enrichment can help if you want the baseline definitions. For agency growth, four data types matter most.

B2B Data Enrichment Types for Agency Pipeline

| Data Type | What It Is | Primary Use Case for a Dev Agency | Source Example |

|---|---|---|---|

| Firmographic | Company-level attributes such as industry, size, geography, business model, and organizational context | Narrow target accounts to the companies your delivery model and deal size fit | CRM data, enrichment providers, company websites |

| Technographic | Data on software stack, infrastructure, tools, and platform dependencies | Find accounts running systems your team can migrate, integrate, modernize, or replace | Technographic vendors, public web signals, install detection |

| Intent | Behavioral signs that an account is actively researching a problem or solution category | Time outbound when buying curiosity is visible instead of guessing based on static ICP fit | First-party site behavior, third-party topic research, content engagement |

| Predictive | Scored buying propensity derived from conversion history plus enriched attributes | Prioritize accounts that look both qualified and in-market | ML scoring inside enrichment platforms and rev ops workflows |

Firmographic data filters out impossible deals

Firmographics are basic, but not optional. They answer the first question every founder should ask. Is this account even capable of buying the kind of project we sell?

For agencies, these help eliminate obvious waste. If your team sells custom modernization projects, very small firms without engineering complexity usually do not belong on the list. If you specialize in a regulated niche, the industry field matters because the compliance burden changes the pitch, the stakeholders, and the timeline.

Firmographics do not create pipeline by themselves. They stop garbage from entering the system.

Technographic data is where agency relevance starts

Technographic enrichment is more important for dev agencies than for most B2B companies because your offer is tied directly to the prospect’s stack.

If you sell cloud migration, legacy modernization, platform rebuilds, or integration work, stack details are not “nice context.” They are qualification criteria. A Java monolith, a fragmented Azure setup, an aging CMS, or a heavy AWS footprint all point to different services, risk patterns, and hooks for outreach.

Intent data fixes timing

Intent separates “good account someday” from “worth contacting now.”

Amplemarket’s explanation is the right one for agency outbound. Technographic enrichment combined with behavioral intent data such as pricing page visits or solution-specific content downloads enables segmentation that improves reply rates from sub-1% to 10-20% (https://www.amplemarket.com/blog/the-power-of-data-b2b-data-enrichment-explained). That kind of lift comes from relevance plus timing, not from writing cuter emails.

A practical example: a prospect visiting pricing pages after reading content about API integration issues is a different lead from a static list record with the same job title.

Predictive data helps reps stop guessing

Predictive enrichment is where the stack gets useful for prioritization. Datamatics explains it well: predictive enrichment identifies accounts actively in-market by analyzing signals like technographic compatibility and intent indicators, and those accounts typically convert at 3-5x higher rates than accounts selected through firmographic matching alone (https://www.datamaticsbpm.com/blog/complete-guide-on-when-and-how-to-do-data-enrichment/).

That matters because most agencies still rank accounts with crude rules such as vertical + headcount + title. That is not enough.

A founder does not need more TAM. A founder needs a ranked list of accounts that fit the niche, run the right stack, and show signs of active demand.

The right way to think about these four layers is simple:

| Layer | What question it answers |

|---|---|

| Firmographic | Can they buy? |

| Technographic | Do they fit our delivery edge? |

| Intent | Is now a sensible time to talk? |

| Predictive | Which account should the team contact first? |

That stack gives your SDRs and marketers something they rarely have. A reason to contact this account now, with this message, instead of generic outreach to everyone who vaguely matches your ICP.

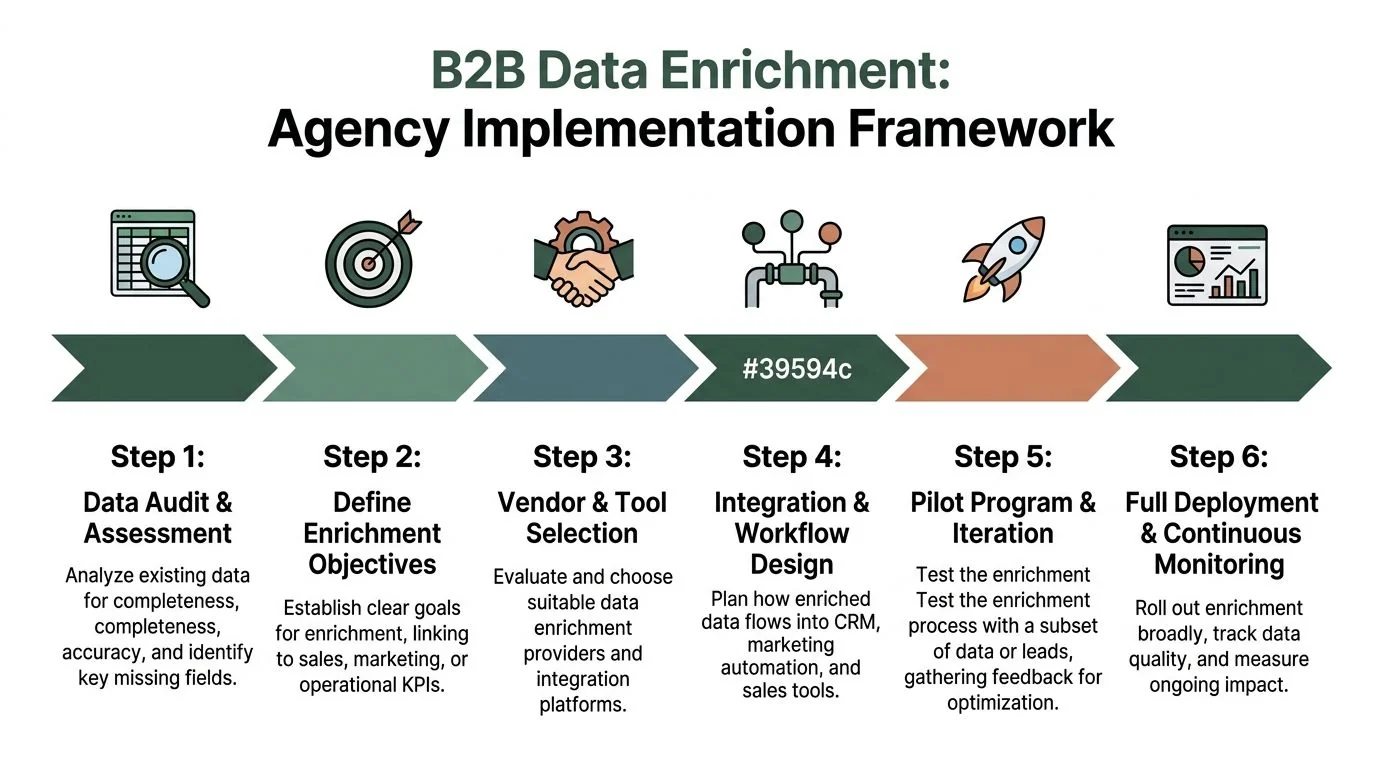

The Agency-Focused Implementation Framework

Most agencies implement enrichment backwards. They buy a vendor, dump data into the CRM, then wonder why nobody trusts the output. The correct sequence is operational. Audit first, define rules, wire the flow, then score and activate.

Start with a field-level audit

Do not begin with vendor demos. Begin with your CRM schema.

Pull a sample of accounts, contacts, and opportunities. Check which fields are complete, which are inconsistent, and which the team uses. Most agencies discover that a large share of fields are either blank, free-text chaos, or stale copies from old imports.

The minimum audit should answer:

- Which fields drive targeting. Industry, service line fit, geography, role, account owner.

- Which fields drive personalization. Tech stack, hiring context, recent company changes, content engagement.

- Which fields drive routing and reporting. Lifecycle stage, source, campaign association, account status.

If a field does not influence targeting, routing, or reporting, stop enriching it.

Use waterfall enrichment to control cost

A waterfall model means you query data sources in sequence until the record meets your completeness and confidence threshold. Not every record deserves the same spend.

A practical waterfall for a dev agency usually looks like this:

| Stage | Record type | Recommended method | Reason | |---|---|---| | First pass | Existing CRM database | Batch enrichment | Clean large volumes cheaply and standardize fields | | Second pass | Named target accounts | Higher-confidence provider check | Fill priority account gaps before outbound | | Third pass | Inbound demo or hand-raiser | Real-time API enrichment | Speed matters more than batch efficiency | | Fourth pass | Strategic open opportunities | Manual research plus validation | High-value deals justify human review |

Founders should think like engineers here. You do not run premium lookups on every record. You reserve the most expensive checks for the records closest to revenue.

Split real-time and batch processing properly

Batch processing is for database maintenance. Real-time enrichment is for moments where speed changes action.

Use batch jobs when you are cleaning a large CRM segment, rebuilding account lists, or normalizing historical records. Use real-time API enrichment when a new lead enters a form, a target account hits an intent threshold, or an SDR opens a record before outreach.

The implementation detail that matters is field mapping. If different tools write company size, industry, or title values into different formats, your CRM will become less usable after enrichment than before it.

A sane build uses:

- Standardized picklists for industry, segment, geography, and account tier

- Controlled write rules so one source cannot overwrite trusted values without validation

- Confidence flags on appended fields when the tool provides them

- Suppression logic for low-confidence or conflicting records

Set governance before rollout

Most CRM chaos is a governance failure, not a vendor failure.

Assign one owner for enrichment rules. Usually that sits in rev ops, not sales and not marketing. Sales should not decide field definitions ad hoc. Marketing should not import records without validation rules. Founders should care, for list quality either compounds or collapses at this stage.

Use a simple governance model:

| Governance item | Rule |

|---|---|

| Data owner | One rev ops or GTM ops owner approves mappings and overwrite rules |

| Source hierarchy | Define which source wins for each field |

| Minimum confidence | Reject low-confidence data before it lands in active CRM views |

| Refresh policy | Assign cadence by field type and sales use case |

| Audit log | Track source, update date, and changed values |

If your team cannot answer who owns field definitions and overwrite rules, do not buy another enrichment tool. Fix governance first.

Build predictive scoring on top of enriched data

After the field layer is stable, score accounts by buying readiness. Datamatics notes that predictive enrichment uses technographic compatibility and intent indicators to identify in-market accounts, and those accounts convert at a significantly higher rate than accounts selected through firmographic matching alone. That is the right architecture for agency outbound.

A useful account score combines:

- Firmographic fit

- Technographic relevance

- Intent strength

- Contact coverage

- Opportunity context, if the account has prior engagement

Do not overcomplicate the first version. A ranking model that the team trusts is better than a black box nobody uses.

For agencies that want a done-with-you route instead of assembling the whole stack internally, services that combine list building, account mapping, and outbound ops can sit on top of the enrichment layer. One example is https://100signals.com/services/lead-generation/, which packages lead generation for software development companies around niche targeting and enriched list building. Use that kind of service only if you still keep ownership of your schema, scoring logic, and reporting.

Pilot on one niche, not the whole CRM

Do not roll this out across every market at once. Pick one niche where your agency already has delivery proof and a clear offer.

Good pilot conditions:

- one service line

- one vertical or buying problem

- one mapped account list

- one outbound motion

- one accountable owner

Then measure whether enrichment improved targeting, outreach relevance, and pipeline movement compared with the prior state. If the pilot fails, the problem is usually one of three things: poor field design, weak source quality, or lack of enforcement in the workflow.

Vendor Selection and Pricing Models Decoded

The vendor conversation is simpler than most buyers make it. You are choosing between two models. A proprietary database vendor with its own large dataset, or a multi-source aggregator that pulls from several providers and APIs.

The right choice depends less on branding and more on your GTM motion.

Proprietary database versus aggregator

| Model | Strength | Weakness | Best fit for a dev agency |

|---|---|---|---|

| Proprietary database | Consistent UI, broad built-in data, simpler procurement | You are locked into one vendor’s coverage and freshness | Teams that want one system and can live within its data model |

| Multi-source aggregator | More flexibility, better source mixing, stronger fit for waterfall logic | More setup work, more mapping complexity | Agencies that care about refresh control, source-level governance, and niche targeting |

If your outbound depends on highly specific stack data, niche account mapping, or source-by-source validation, aggregators usually fit better. If your team values speed of adoption over flexibility, a single large database may be enough.

Do not confuse convenience with quality. A polished interface can still hide stale records, weak confidence indicators, or thin technographic coverage in the niche you sell into.

Pricing model tells you how the vendor expects you to behave

Ignore the headline package first. Look at the unit economics.

| Pricing model | How it works | Budget risk | Best use case |

|---|---|---|---|

| Per-seat | You pay by user | Gets expensive as SDR, AE, and ops access expands | Small teams with few users and manual workflows |

| Per-record or credit | You pay per enriched contact or account | Penalizes large list building and frequent re-enrichment | Highly selective account-based motions |

| API volume | You pay based on lookups or calls | Costs spike if workflows are poorly scoped | Real-time enrichment and product-led or inbound workflows |

A founder should model cost around behavior, not brochure pricing.

If your team maps a narrow set of target accounts and enriches selectively, credit-based pricing can work. If you need several users in sales, marketing, and ops looking at the same records daily, per-seat can become wasteful fast. If you run real-time enrichment on every form fill, every hand-raiser, and every account change event, API costs need hard controls.

What to test before signing

Use a small evaluation matrix. Not feature bingo.

| Evaluation area | What to inspect |

|---|---|

| Match quality | Does the vendor cover your niche accounts and stakeholder roles well? |

| Freshness handling | Can you see update recency or provenance by field? |

| Technographic depth | Does the stack data support your actual service offers? |

| Governance support | Can you set overwrite rules, confidence thresholds, and source priority? |

| Integration friction | Will it write cleanly into HubSpot, Salesforce, or your warehouse? |

The vendor is not the strategy. The vendor is a component in the pipeline. Buy the one that fits your operating model, not the one with the loudest category claims.

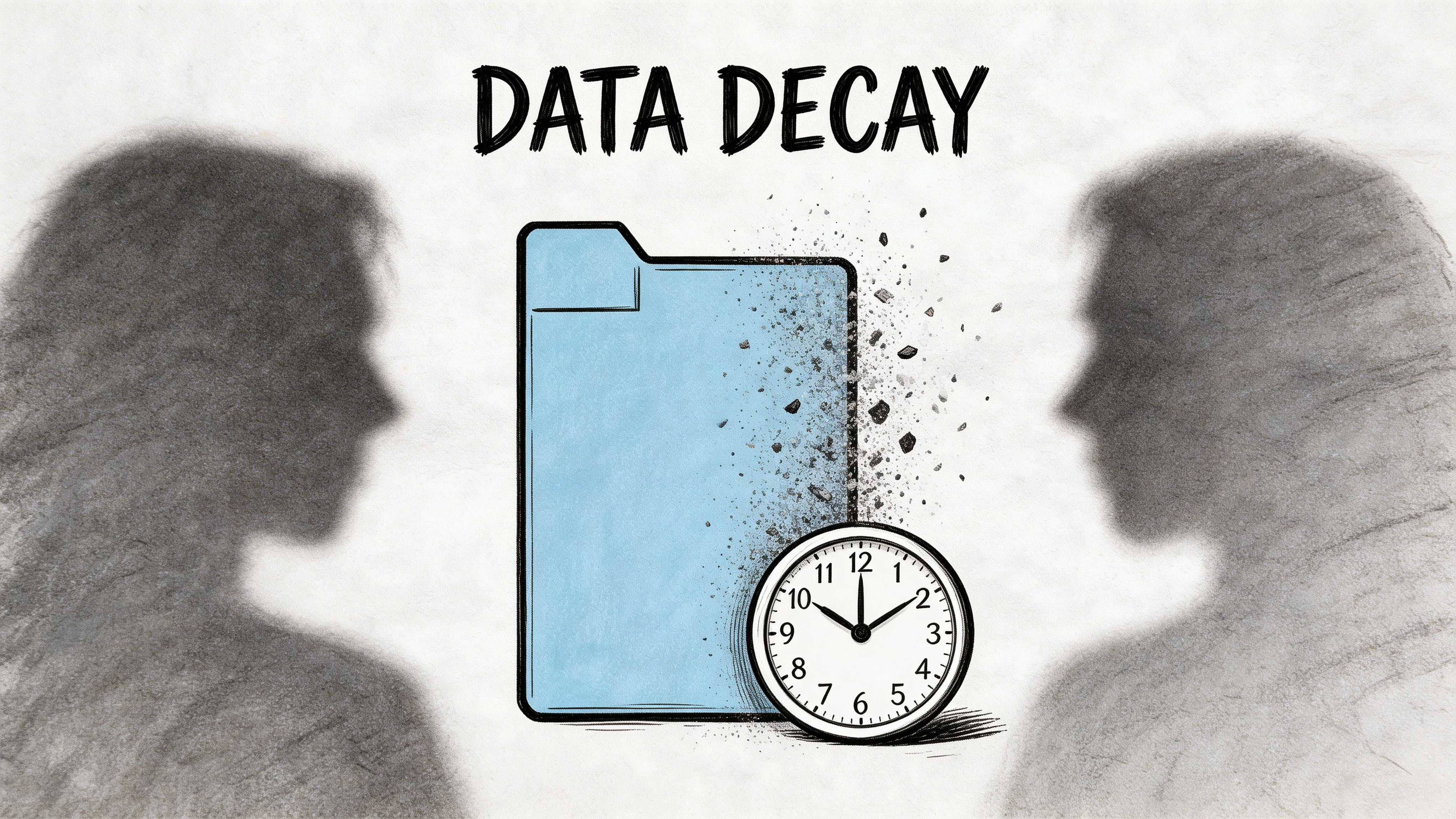

The Two Hidden Costs Nobody Mentions

Most vendor pages sell b2b data enrichment as if you buy it once and keep the benefit forever. That is false. Two costs matter more than the initial contract. Data decay and attribution.

Cleanlist’s guide states that B2B data becomes stale within 3–6 months due to job changes and company pivots, with 30-40% annual data quality loss if it is not actively maintained (https://www.cleanlist.ai/blog/2026-02-20-b2b-data-enrichment-complete-guide). That means a one-time enrichment pass is not a system. It is a temporary patch.

Hidden cost one is refresh burden

Founders usually budget for acquisition of data, not maintenance of data.

The practical consequence is brutal. You enrich a list, launch outreach, and get decent early performance. Then job changes, org reshuffles, and company shifts start eating the list. SDRs think the market got colder. In reality, the data got older.

Use a field-based refresh policy instead of a blanket one:

| Data category | Refresh logic |

|---|---|

| Contact data | Refresh more often because titles, emails, and ownership change fastest |

| Firmographic data | Refresh when account planning or segmentation depends on it |

| Technographic data | Refresh before campaigns tied to migration, integration, or replacement offers |

| Intent signals | Treat as time-sensitive and operational, not archival |

The exact cadence depends on your motion, but the principle is fixed. Refresh the fields that drive action, not every field in the schema.

Hidden cost two is ROI attribution

The second problem is measurement. Vendors love citing benefits. Very few explain how to prove enrichment caused them.

Primer’s critique is useful here. It points out the gap directly: there is almost no practical guidance on how to measure ROI, benchmark before and after, or isolate enrichment’s contribution from the rest of demand gen (https://www.sayprimer.com/blog/the-untapped-potential-of-b2b-data-enrichment).

The fix is not complicated. Build a before-and-after scorecard around metrics enrichment should influence directly.

| Metric | Why it matters |

|---|---|

| Bounce rate | Contact accuracy check |

| Connect rate | Whether verified contacts are reachable |

| Positive reply quality | Whether targeting and context improved |

| SQL conversion | Whether better-fit accounts moved forward |

| Sales cycle movement | Whether account selection and timing improved |

| Rep research time | Whether manual prospecting load dropped |

Measure enrichment like infrastructure. Track the operational metrics it should change first, then connect those gains to pipeline progression.

Do not try to prove everything at once. Compare one niche campaign or one outbound motion before and after enrichment. Hold messaging relatively steady. Keep owner, segment, and process consistent. Then inspect what changed.

That approach will not give you perfect attribution. It will give you useful attribution. For most agency leadership teams, that is enough to decide whether enrichment deserves to become a permanent GTM layer.

Real Agency Use Cases and ROI

Theory matters less than application. Here is what b2b data enrichment looks like when an agency uses it to sharpen account selection and improve timing.

Use case one with niche validation through stack data

A .NET agency wants to own the “legacy financial services app modernization” niche.

They start with a large CRM and several exported lists. Before enrichment, the records are too broad to use. Titles are inconsistent. Industry tags are messy. There is no reliable view of which firms run the kind of environment the agency can modernize.

So they rebuild the target universe around four layers:

- firmographic filtering for financial services relevance and account viability

- technographic checks for stack compatibility

- verified stakeholder mapping across engineering and technology leadership

- intent and engagement triggers for activation priority

The result is not “more leads.” It is a much smaller, cleaner account set that gives the agency a reason to exist in that niche. SDRs can reference real technical context instead of generic cloud language. Content can be written for the stack environment those buyers operate in.

That provides the core ROI. Better strategic compression. Less random outreach.

Use case two with timed outreach

A Python and Django shop wants to break into AI-adjacent product engineering work without sounding like every other vendor.

They watch for intent-style signals and pair those with technographic fit. A target account shows active research around integration and platform capability, and the agency can see enough stack context to know the opportunity is plausible.

The outreach changes because the data changed. Instead of “we help companies build AI products,” the note references the account’s likely architecture constraints, the integration challenge they appear to be researching, and the role of a development partner in shortening implementation risk.

That is where timing turns into meetings.

To see a practical discussion of enrichment and GTM execution, this video is a useful companion:

What ROI should mean for an agency

Do not define ROI only as closed revenue from one campaign. That is too narrow and too delayed.

For agencies, enrichment ROI usually shows up first as:

- better target account precision

- fewer wasted SDR touches

- more credible personalization

- cleaner niche segmentation

- more usable pipeline reporting

If you need one hard benchmark to keep in mind, Amplemarket notes that technographic and intent-layer enrichment can significantly improve outbound reply rates when paired with relevant positioning. That is not a writing trick. It is what happens when the message is sent to the right account, the right contact, at the right time.

From Enriched Data to Defensible Niches

A software agency does not win by owning a giant database. It wins by becoming the obvious choice in a narrow market.

That requires accurate account selection, stack-aware segmentation, stakeholder mapping, and timing signals. In other words, it requires b2b data enrichment as operational infrastructure. Without that layer, niche positioning stays theoretical because your targeting cannot support it.

The bigger strategic payoff is this: Enriched data lets you map a finite set of accounts, understand which ones match your delivery edge, and publish authority around the exact problems those buyers have. It also makes outbound more credible because the contact and account context are specific enough to earn a reply.

If you care about niche ownership, you also need to understand how competitors are positioned, what buyers are seeing, and where whitespace exists. That is where competitive intelligence becomes part of the same system. Data enrichment tells you who to target. Competitive intelligence helps you decide how to position against the other agencies chasing the same budget.

Founders who dismiss this as marketing usually stay stuck competing on referrals, vague capability decks, and price. Founders who treat data quality like GTM infrastructure build a narrower list, a sharper message, and a pipeline that behaves more like an engineered system than a random sequence of lucky deals.